Pay once.

Own your AI.

Full IDE + AI chat on your machine. No cloud. No subscriptions. Your data stays where it belongs.

* View on desktop or tablet to try the interactive demo *

Changes made:

- Created `src/middleware/rateLimit.ts` with a Map-based sliding window implementation

- Updated `src/api/auth.ts` to import and apply the limiter to the `/login` route

2 files modified, all checks passed.

You can adjust both values in the `loginLimiter` call in `auth.ts`:

```typescript
const loginLimiter = rateLimit({ windowMs: 60000, max: 5 })
```

Change `max` for the request count or `windowMs` for the time window (in milliseconds).
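For a sense of what a Map-based sliding window limiter can look like, here is a minimal sketch. It is not the file from the demo: the `RateLimitOptions` and `RateLimiter` shapes and the `allow` method are assumptions made for illustration, and a real route middleware would wrap `allow` in whatever handler signature the server framework expects.

```typescript
// Hypothetical options shape, mirroring the call shown above.
interface RateLimitOptions {
  windowMs: number; // length of the sliding window, in milliseconds
  max: number; // maximum requests allowed per key inside the window
}

// Hypothetical limiter interface, not the demo's actual export.
interface RateLimiter {
  // Returns true if the request is allowed, false if it should be rejected.
  allow(key: string, now?: number): boolean;
}

function rateLimit({ windowMs, max }: RateLimitOptions): RateLimiter {
  // key (for example a client IP) -> timestamps of requests still in the window
  const hits = new Map<string, number[]>();

  return {
    allow(key: string, now: number = Date.now()): boolean {
      const windowStart = now - windowMs;
      // Drop timestamps that have slid out of the window.
      const recent = (hits.get(key) ?? []).filter((t) => t > windowStart);

      if (recent.length >= max) {
        hits.set(key, recent);
        return false; // over the limit: reject without recording the attempt
      }

      recent.push(now);
      hits.set(key, recent);
      return true;
    },
  };
}

// Same values as the demo: 5 requests per 60 seconds, per key.
const loginLimiter = rateLimit({ windowMs: 60000, max: 5 });
```

Passing `now` explicitly keeps the sketch easy to test. A production limiter would also evict keys that have gone idle so the Map does not grow without bound.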

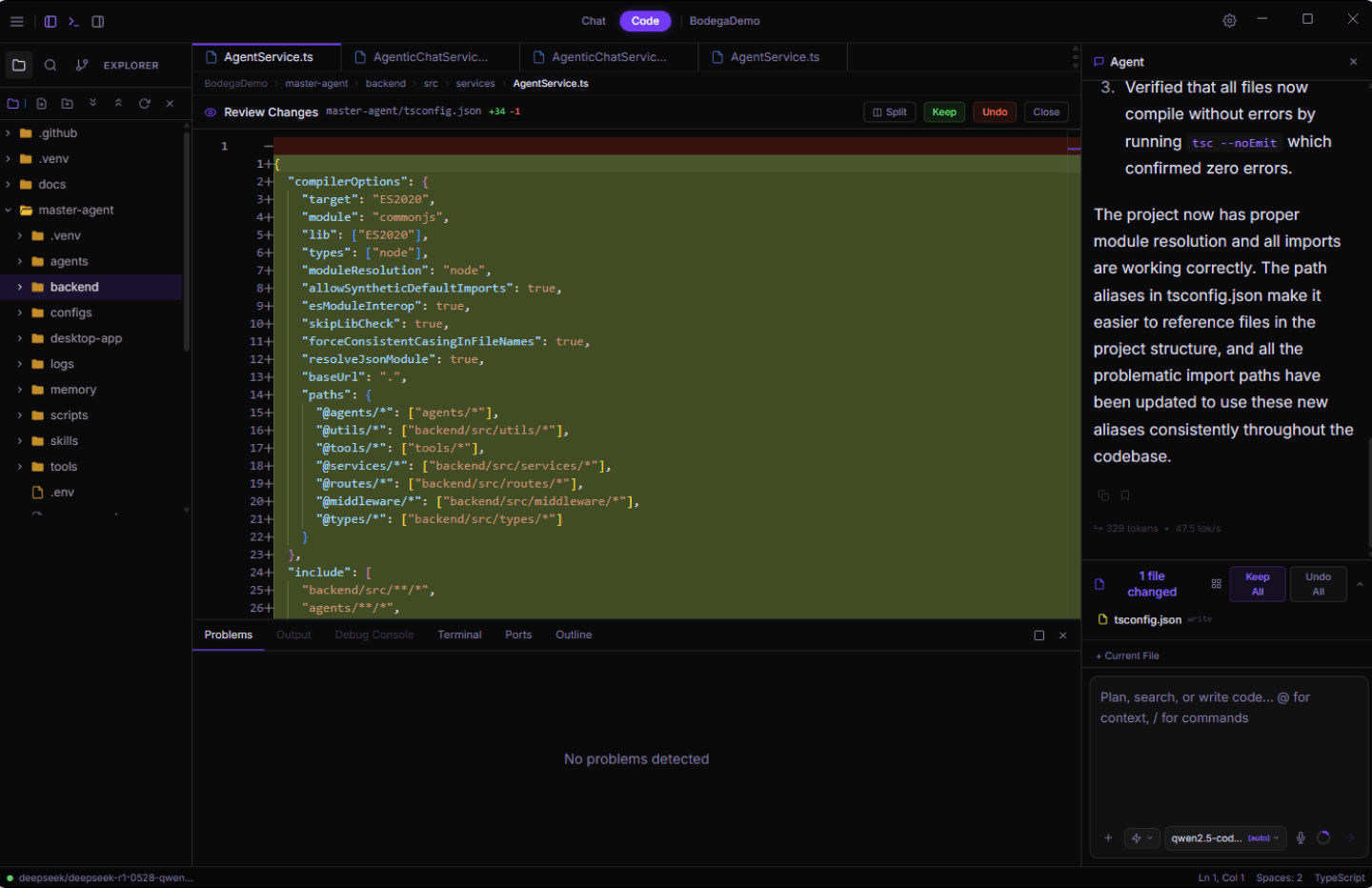

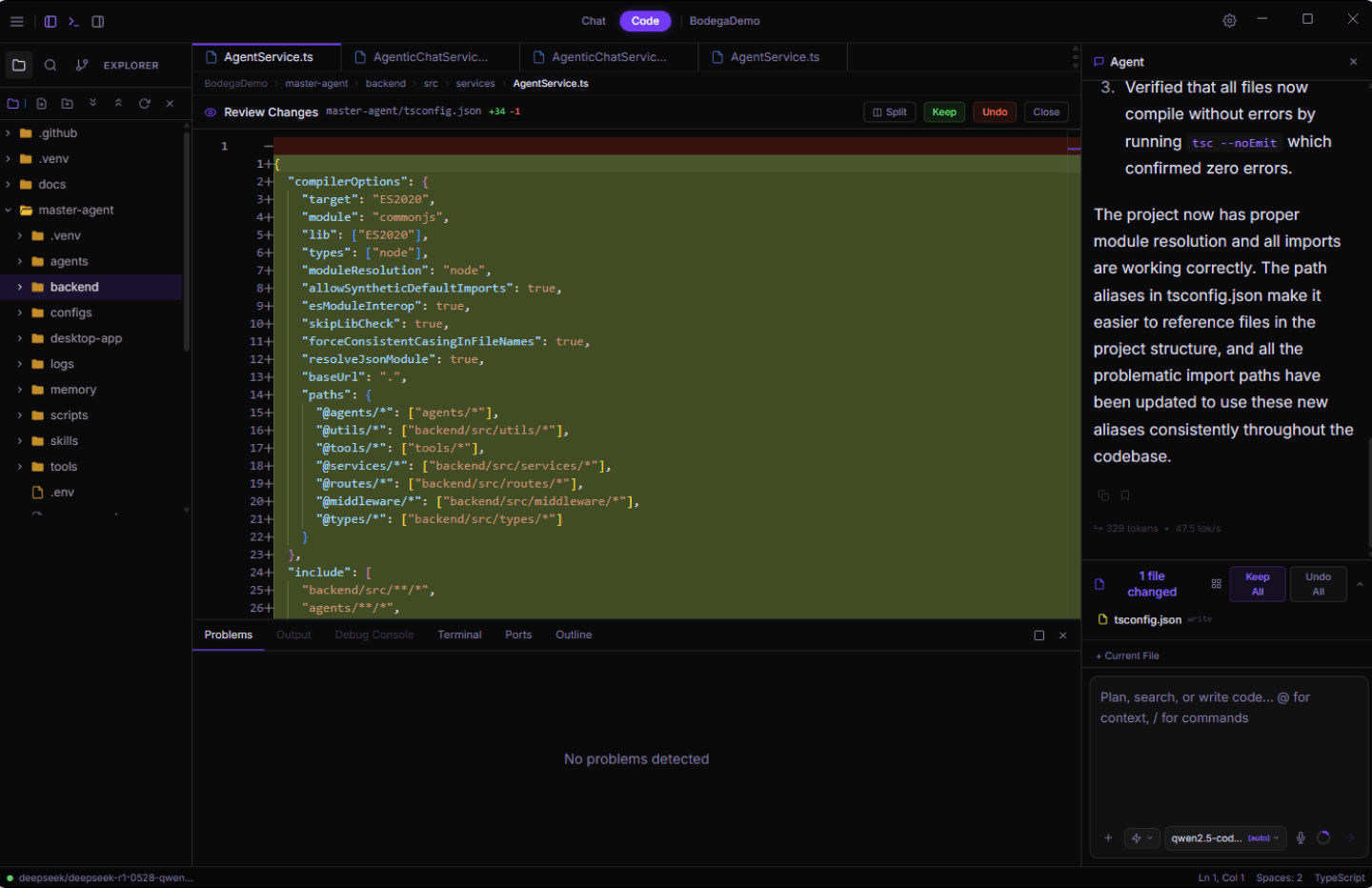

Interactive replica of the Bodega One desktop application. Click files in the tree to view code, switch between Agent, Changes, and Context tabs in the right panel, and toggle the terminal and sidebar panels.

Interactive demo - click around to explore the app

Some menus and options may not be available. Files, chats, and data shown are for demonstration purposes only.

10+ providers. Your key. Your choice.

Built different

What the demo doesn't show you.

Quality Enforcement Layer

Most AI agents write code and move on. Bodega One doesn't. Every change runs through a 5-step verification loop before it's considered done. If it fails, the agent fixes it. You don't have to.

Step 1: syntax validation on every file write

Step 2: incremental verification per file, mid-loop

Step 3: compiler + type checks every 2nd write

Step 4: test gate execution with hard timeout

Step 5: full structural pass after every completed task
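One way to picture the cadence of those steps is as a small scheduling function. This is a sketch under stated assumptions, not Bodega One's implementation: the `Step` and `AgentEvent` names are hypothetical, and placing the test gate at task completion is a guess, since the list above does not say when it runs.

```typescript
// Hypothetical labels for the five steps listed above.
type Step = "syntax" | "incremental" | "typecheck" | "tests" | "structural";

// Hypothetical events an agent loop might emit.
type AgentEvent =
  | { kind: "fileWrite"; writeCount: number } // 1-based count of writes in the task
  | { kind: "taskComplete" };

function stepsFor(event: AgentEvent): Step[] {
  if (event.kind === "taskComplete") {
    // Assumption: the test gate runs here, just before the structural pass.
    return ["tests", "structural"];
  }

  // Steps 1 and 2 run on every file write.
  const steps: Step[] = ["syntax", "incremental"];

  // Step 3 runs on every 2nd write.
  if (event.writeCount % 2 === 0) {
    steps.push("typecheck");
  }
  return steps;
}
```

Under these assumptions, the first write triggers only the syntax and incremental checks, and the second write adds the compiler and type check.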

Air-Gap Mode

Cut the cable entirely. Air-gap mode runs 9 enforcement layers that block every outbound path. No telemetry, no model calls, no background pings. Verified zero bytes leave your machine. Built for codebases that can't touch the internet.

9 network enforcement layers

Works fully offline with any local model

No exceptions, no workarounds, no asterisks
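The nine layers are not itemized here, so the following is only an illustration of what a single outbound check could look like: a guard that permits requests to loopback addresses and refuses everything else. The `isLocalOnly` function and its host list are hypothetical and are not taken from the product.

```typescript
// Hosts treated as "this machine". WHATWG URL keeps the brackets on IPv6 hostnames.
const LOOPBACK_HOSTS = new Set(["localhost", "127.0.0.1", "[::1]"]);

// Hypothetical guard: true only when the target is a loopback address.
function isLocalOnly(target: string): boolean {
  let url: URL;
  try {
    url = new URL(target);
  } catch {
    return false; // unparseable targets are blocked, not allowed through
  }
  return LOOPBACK_HOSTS.has(url.hostname);
}
```

A check like this would let a locally served model stay reachable while any request to a remote host is refused. Failing closed on malformed input is the conservative choice for an air-gapped setup.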

Context Inspector

See exactly what gets sent to the model before it goes. The Context Inspector surfaces the full context window so you always know what the AI is working with. No black box.

Full pre-call visibility: files, memory, system prompt

Edit or trim context before sending

~52% token savings via observation masking
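Observation masking generally means replacing older tool outputs in the conversation history with a short placeholder while keeping the most recent ones verbatim. The sketch below shows that general idea only. The `Turn` shape, the `MASK` text, and the `keepLast` parameter are assumptions for illustration; it does not describe how Bodega One implements masking and makes no claim about the savings figure above.

```typescript
// Hypothetical shape of one entry in the conversation history.
interface Turn {
  role: "user" | "assistant" | "tool";
  content: string;
}

// Placeholder that replaces masked tool output (hypothetical wording).
const MASK = "[observation omitted]";

// Keep the last `keepLast` tool observations verbatim and mask the older ones.
// Returns a new array; the input history is not modified.
function maskObservations(history: Turn[], keepLast: number): Turn[] {
  const total = history.filter((t) => t.role === "tool").length;
  let index = 0; // tool observations seen so far, oldest first

  return history.map((turn) => {
    if (turn.role !== "tool") return turn;
    index++;
    const isRecent = index > total - keepLast;
    return isRecent ? turn : { ...turn, content: MASK };
  });
}
```

User and assistant turns pass through untouched, so the model still sees the full reasoning trail and loses only the bulky output of older tool calls.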

We're builders who got tired of asking permission. Bodega One exists because we think the developer tool industry made a wrong turn.

Here's what we believe.

Software you buy should stay bought.

No subscriptions. Not here.

We build for developers, not procurement.

Decisions start with the dev, not the org chart.

Your workflow shouldn't stop because someone else's server is down.

Built to work without a net.

Your data is yours. Full stop.

Zero bytes leave your machine.

Pay once. Keep it forever.

Personal

$79

one-time

- ✓ 2 machine activations

- ✓ Full Chat Mode + Code Mode

- ✓ All 23 built-in tools

- ✓ Autonomous coding agent

Pro

$149

one-time

- ✓ 5 machine activations

- ✓ Everything in Personal

- ✓ Commercial use license

- ✓ Cloud Boost (per-message cloud toggle)

- ✓ Custom agents

- ✓ Agent scheduling

- ✓ Unlimited workspaces

- ✓ Early access + priority support

Enterprise

Custom

contact us

- ✓ 10 machine licenses

- ✓ Everything in Pro

- ✓ Admin console

- ✓ Dedicated support

- ✓ SLA & compliance docs

Beta opens May 2026 — first 200 users only. Complete the 14-day beta and get a $30 promo code by email before launch.

Frequently Asked Questions

Everything you need to know before joining the beta.

Does Bodega One include an LLM or AI model?

No. Bodega One is bring-your-own-LLM. We don't bundle or host any model. Run local models through Ollama, LM Studio, or a similar runtime for full privacy. Or plug in your own API keys for Claude, GPT, Groq, or any of our 10+ supported providers. You pay those providers directly at their rates. We never charge markup or touch your usage.

What's the difference between Personal and Pro?

Personal ($79) is the full product: Chat Mode, Code Mode, all 23 built-in tools, and 2 machine activations. Pro ($149) adds commercial use rights, Cloud Boost, custom agents, agent scheduling, unlimited workspaces, 5 machine activations, early access, and priority support.

Can I really run this with no internet connection?

Yes. If you're using local models (Ollama, LM Studio, llama.cpp), Bodega One runs completely offline. Air-gap mode removes web search tools entirely. Cloud API support is optional.

How is this different from Cursor or GitHub Copilot?

Cursor is subscription-only, cloud-dependent, and locks you into their model choices. GitHub Copilot is the same: monthly fee, cloud-only, Microsoft's ecosystem. Bodega One is pay-once, runs locally by default, and lets you use any LLM you want. You own it. You don't rent it.

When does the beta start?

Beta starts May 2026. Complete the 14-day beta period and you'll receive a $30 promo code by email before launch. Full launch is July 6, 2026.

What is your refund policy?

All sales are final after license key activation. Contact us for pre-activation billing issues.

Pull up a chair.

Developers, tinkerers, and people just getting started with local AI. Show up where you are. Ask questions, share what you're making, or just watch the build happen.

Come say hi.

Join the Beta.

First in line. First to build.

Complete the 14-day beta. Get $30 off at launch.